If you’ve used any online tools or apps for work, relied on advanced technology for everyday efficiency, or even scrolled your social media feed recently – chances are you’ve interacted with AI.

While we are still coming to understand the depth of the impact this new artificial intelligence phenomenon has on our society, work, and home life, there’s one thing we know for sure: AI is not going away.

According to a 2022 Europol report, experts estimate that as much as 90% of online content could be artificially generated by 2026.

Many forms of AI have become part of our everyday household or work-life routine, and there are many more developments in artificial intelligence to come.

As with any other innovation suddenly introduced into our society (remember life before social media?), we must be careful how we move forward with a tool as powerful as AI.

In a short amount of time, it’s become a helpful system for many to create content and access information or data to their advantage. Yet it raises the question of how AI affects diversity, equity, and inclusion in what it creates.

How is AI Used?

Artificial intelligence (AI) is a field that combines computer science with large datasets to enable problem-solving or content creation. It also includes the sub-fields of machine learning and deep learning, which build systems that make classifications or generate content based on input data.

Especially in marketing, AI art and writing generators can offer real advantages for social media campaigns or promotional content. Prompt-based writing generators like ChatGPT are streamlining writing and SEO content in a fast, efficient way.

While many improvements and updates have been made since the beginning of AI in marketing, there is much work to be done in order to build a less biased and more diverse system of content.

Generalizations and biases are common occurrences in any area of social life. It’s why we rely on advanced tech and data to make fairer, less biased decisions. Yet AI itself is structured by people: human input and training are required to establish precise information and data and to generate correct output. This has raised the conversation about how improving diversity in AI begins with a more inclusive and diverse tech team.

How Creating Inclusive AI Starts with Building a More Diverse Foundation

Diversity at any level is increased when there’s a powerful and equitable team behind it. The World Economic Forum reports that 78% of global professionals with AI skills are male.

To build a more unbiased solution for AI art and creation, a wide range of perspectives, experiences, and diverse thoughts is needed to create more awareness.

Recently, a report by the AI Now Institute of New York University has concluded that:

- 80% of AI professors are men

- Only 15% of AI researchers at Facebook and 10% of AI researchers at Google are women

Information in this report also reflects a larger issue specific to the computer science industry, where fewer than 25% of PhDs were awarded to women or minorities in 2018.

Further studies have found that large tech companies and corporations severely lack representation in the workplace. A 2019 study on diversity and inclusion in tech highlighted the lack of representation at Google:

- 39.8% of employees were Asian

- 54.4% were White

- 5.7% were Latinx

- 3.3% were Black

This lack of inclusivity in tech workplaces has a direct effect on the results and content generated by AI technology and platforms.

For instance, one MIT student was using a platform’s recent AI features to enhance her business headshot for a job application. Rona Wang uploaded a smiling picture of herself to an AI image generator and prompted the app to create “a professional LinkedIn profile photo.” In a matter of seconds, the generator produced a nearly identical image, but made her complexion appear lighter and her eyes blue, “features that made me look Caucasian,” she said.

Image courtesy of the Boston Globe

The tech industry, and specifically corporations built around AI innovation, is in urgent need of more diverse perspectives and contributions toward accurate data. Biased human input that becomes embedded in these systems can impose harmful generalizations on specific groups. It’s crucial that the future of AI technology benefits all people and does not just produce results from one homogenized group.

How is AI Art Impacting Cultural Diversity?

Beyond its immense advantages in technological support, AI is also being used to create new art forms. As contemporary art merges with innovative technology, many new trends have emerged from AI art.

Today, there are plenty of tools and techniques artists can use to explore the expansive capabilities and creativity of AI. These include:

- Generative Adversarial Networks (GANs)

- Image style algorithms

- Computer-aided drawing tools

- Image classification systems

- Art chatbots

Many of these AI art systems work by having the user describe the specific image or visual they would like to create. For instance, in an AI art-generating platform like Midjourney, you can create a prompt as simple as “What does a fast food worker look like?” or a prompt such as “Create a group of people gathered at the park having a picnic.”

As with all AI art prompts, the results will vary based on how specific or precise the user’s prompt details are.

Although the technology has improved since her first experiments, Stephanie Dinkins, like other Black artists, has found herself using roundabout terms and awkward phrasing to achieve a desired visual. Whether Dinkins used the term “African American woman” or “Black woman,” AI generators depicted distorted facial features and hair textures at a higher rate than they did for other similar prompts.

Because of this, many AI art generators can reproduce harmful biases around gender, race, age, and skin color, exaggerating racial depictions rather than reflecting real-world representation.

Additionally, multi-racial or interracial features may not be represented as accurately because of insufficient data collection or input.

The Risk of Embedding Representational Harms in AI Art Generation

In a Bloomberg Technology analysis, Leonardo Nicoletti and Dina Bass generated a large set of AI portraits of workers in different professions. The generated images were then sorted by skin tone and gender. The results found:

- Portraits for higher-paying professions such as CEO, lawyer, and politician predominantly had white skin tones

- Portraits with dark skin tones were more commonly associated with lower-income professions, such as janitorial roles, fast food workers, or gas station employees

- Portraits of doctors, CEOs, and engineers were predominantly male

- Portraits of cashiers, social workers, and housekeepers mainly depicted women

These representational harms in AI art and images degrade certain social groups and reinforce the status quo of already amplified stereotypes.
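For readers who want to run a similar check on their own generated images, the sorting step described above can be sketched in a few lines of Python. This is only a minimal illustration under stated assumptions: the records, the label names, and the `share_by_profession` helper are all hypothetical, the labels are assumed to have been assigned by hand, and none of the numbers come from the Bloomberg analysis.

```python
from collections import Counter, defaultdict

# Hypothetical, hand-labeled records: (prompted profession, perceived gender label).
# These rows are invented for illustration and are NOT data from the Bloomberg analysis.
labeled_images = [
    ("CEO", "man"),
    ("CEO", "man"),
    ("CEO", "woman"),
    ("cashier", "woman"),
    ("cashier", "woman"),
    ("cashier", "man"),
    ("cashier", "woman"),
]


def share_by_profession(records):
    """Return, for each profession, the fraction of images carrying each label."""
    counts = defaultdict(Counter)
    for profession, label in records:
        counts[profession][label] += 1
    return {
        profession: {label: n / sum(tally.values()) for label, n in tally.items()}
        for profession, tally in counts.items()
    }


shares = share_by_profession(labeled_images)
for profession, distribution in shares.items():
    # Round only for display; the underlying fractions stay exact.
    print(profession, {label: round(frac, 2) for label, frac in distribution.items()})
```

Comparing these shares against real-world workforce figures for the same professions is what would show whether a generator is exaggerating a stereotype rather than reflecting reality.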

Like any other AI model, an AI art generator is built on human input and data. Without more diverse perspectives and accurate representation in this new and innovative art form, we are at risk of reinforcing generalizations and stereotypes that can further harm marginalized groups. This is one of the many ways diversity and the potential for inclusive AI art are in jeopardy.

AI Cultural Barbie Case Study

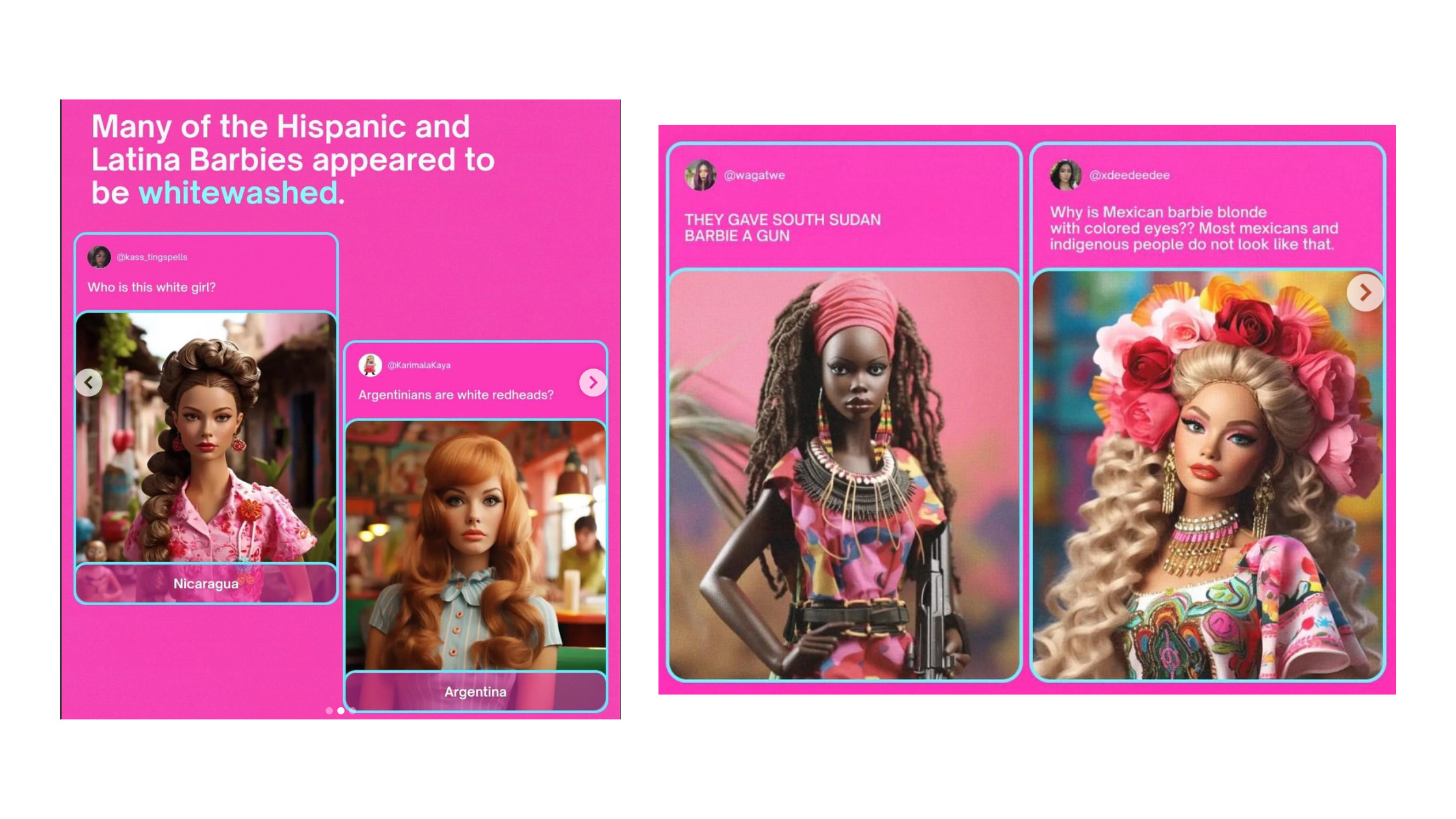

One of the most recent examples of AI art’s lack of diversity is the AI Barbie case. You may have seen the influx of marketing hype and online content around the new Barbie movie.

Images courtesy of Impact and BuzzFeed

The online platform BuzzFeed used AI art technology to generate a Barbie from every country. From Zimbabwe to Russia, the generated doll portraits contained racial inaccuracies and representational harms that disrespected specific cultures.

Most of the generated images whitewashed the people they were meant to represent and showed incorrect cultural attire. For instance, a few Middle Eastern Barbies wore a ghutra, a traditional headdress for men.

Images courtesy of BuzzFeed

The blatant racism and numerous cultural inaccuracies in these examples of AI art generation have further raised concerns about racial bias and representational harm in this new wave of digital art.

What Will the Future of AI Art Look Like?

In the cannabis industry and many other sectors, there is a strong focus on diversity and inclusion, considering the many decades of injustice and inequity faced by marginalized communities. With the introduction of AI generation in our society, it’s imperative to also focus on the most inclusive and unbiased structure possible.

Even with the leaps and bounds AI technology has achieved in a short amount of time, there is still much room for improvement. In order to establish a successful and inclusive community supported by AI technology, more research and innovation will need to stem from diverse perspectives and inputs.

Similar to the cannabis industry, the future advancement of AI is a powerful opportunity to make a clear, correct, and inclusive path toward diversity in our society.

From dabbing to driving tests, AI is also quickly becoming an integral part of the multi-faceted new cannabis industry. Dispensary software and POS systems are including AI generators to provide a more customized experience for shoppers. Technology with artificial intelligence is even assisting cultivators in controlling the climate and environment of their grows, as well as tracking and analyzing intricate data from the grow. Much like cannabis itself, the inclusion of AI technology will continue to grow in tandem with the advances and innovations this industry is poised to bring.

We must weigh the opportunity that AI could bring, and the advantages it can offer our marketing campaigns, against the clear risks it could pose to cultural groups through inequitable and non-inclusive content.

AI does not need to be perfect, but it does need to provide accurate and unbiased representation that is separate from our own human and societal leanings and prejudices.

Build a Less-Biased and More Inclusive Industry with CCG x DEI

AI-powered marketing tools and social apps are improving every day, creating more opportunities for content surrounding your brand.

At Cannabis Creative, we believe our differences are what make us stronger – and we’ll never back down from protecting our people. While a cannabis marketing agency cannot undo the decades of systemic oppression put in place by the War on Drugs, or the representational harm caused by corporations with a lack of diversity, we can take responsibility for creating the change we want to see.

Cannabis Creative Group is proud to launch its Diversity and Inclusion Committee, which works to explore new initiatives and discover educational opportunities in collaboration with diversity partners in our community. We’re dedicated to championing efforts towards more inclusive, equal opportunities for all communities.

Get familiar with our DEI Committee and learn more about Cannabis Creative’s efforts towards DEI on our blog page.